MCP Server Platform

Extensible Model Context Protocol server with dynamic tool registry and Azure integration

Overview

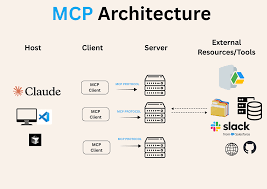

The MCP (Model Context Protocol) Server Platform is a production-grade service that exposes AI tools through a unified REST API. Built to integrate with Azure AI services, the platform handles tool discovery, execution, and management for large language models and AI agents.

The Problem

Organizations using Azure AI services needed a standardized way to:

- Expose custom AI tools to language models without redeploying services

- Handle large file uploads and downloads efficiently without memory bottlenecks

- Provide async execution for long-running AI operations

- Maintain a centralized registry of available tools with version control

- Deploy and scale on Kubernetes with minimal operational overhead

Architecture & Design

Core Components

- FastAPI REST API: High-performance async API server with automatic OpenAPI documentation and request validation using Pydantic models

- CSV-Driven Tool Registry: Dynamic tool discovery system allowing administrators to add new tools by updating a CSV configuration file without code changes

- Runtime Module Loading: Python importlib-based system that dynamically loads tool implementations from specified modules at startup

- Claim Check Pattern: Efficient handling of large files by storing them in Azure Blob Storage and passing only references through the API

- Azure Blob Storage Integration: Secure, scalable storage for tool inputs and outputs with automatic cleanup policies

- Azure Key Vault: Centralized secret management for API keys, connection strings, and service credentials

Tool Registry System

The CSV-driven tool registry is a key innovation that enables non-developers to add new capabilities:

tool_name,module_path,description,version,enabled

image_classifier,tools.vision.classifier,Classify images using CLIP,1.0,true

text_summarizer,tools.nlp.summarizer,Summarize long documents,1.2,true

sentiment_analyzer,tools.nlp.sentiment,Analyze text sentiment,1.0,true

The server reads this CSV at startup, validates each entry, and dynamically imports the specified Python modules. Each tool must implement a standard interface ensuring consistent behavior.
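The startup flow described above (read the CSV, validate each row, import the module) could look roughly like the sketch below. It is an illustration under assumptions, not the platform's actual loader: `ToolEntry`, `load_registry`, and the idea that every tool module exposes a callable named `run` are all hypothetical, since the standard interface is not spelled out here.

```python
import csv
import importlib
import io
from dataclasses import dataclass
from types import ModuleType

REQUIRED_COLUMNS = {"tool_name", "module_path", "description", "version", "enabled"}


@dataclass(frozen=True)
class ToolEntry:
    """One validated row of the registry plus its imported module (hypothetical type)."""
    name: str
    module_path: str
    description: str
    version: str
    module: ModuleType


def load_registry(csv_text: str, entry_point: str = "run") -> dict:
    """Parse the registry CSV and import every enabled tool module."""
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"registry is missing columns: {sorted(missing)}")

    registry = {}
    for row in reader:
        name = row["tool_name"].strip()
        module_path = row["module_path"].strip()
        if row["enabled"].strip().lower() != "true":
            continue  # disabled tools are skipped and never imported
        if name in registry:
            raise ValueError(f"duplicate tool name: {name}")
        module = importlib.import_module(module_path)
        # Assumed interface: each tool module exposes a callable entry point.
        if not callable(getattr(module, entry_point, None)):
            raise TypeError(f"{name}: module has no callable '{entry_point}'")
        registry[name] = ToolEntry(
            name, module_path, row["description"].strip(), row["version"].strip(), module
        )
    return registry
```

Failing fast on a missing column, a duplicate name, or a module without the expected entry point keeps a bad CSV edit from surfacing later as a runtime error in the middle of a tool call.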

Implementation Highlights

Async Request Handling

All tool executions are async-first to prevent blocking operations:

- Used asyncio for I/O-bound operations (API calls, file uploads)

- Implemented thread pools for CPU-bound tasks (ML inference)

- Added request timeout middleware preventing resource exhaustion

- Built retry logic with exponential backoff for transient failures
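As a rough illustration of how these pieces fit together, the sketch below combines a per-attempt timeout, retry with exponential backoff, and offloading blocking work to a thread. The helper names (`call_with_retry`, `run_cpu_bound`) and the default values are assumptions made for the example, not the platform's actual code.

```python
import asyncio
import random


async def call_with_retry(make_call, *, attempts=4, base_delay=0.5, timeout=30.0,
                          retry_on=(ConnectionError, asyncio.TimeoutError)):
    """Await make_call() with a per-attempt timeout and exponential backoff."""
    for attempt in range(attempts):
        try:
            return await asyncio.wait_for(make_call(), timeout=timeout)
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # The delay doubles on each attempt; jitter spreads out simultaneous retries.
            delay = base_delay * (2 ** attempt)
            await asyncio.sleep(delay + random.uniform(0, delay / 10))


async def run_cpu_bound(func, *args):
    """Run a blocking function in a worker thread so the event loop stays responsive."""
    return await asyncio.to_thread(func, *args)
```

Only errors listed in `retry_on` are retried, so a genuine bug in a tool fails immediately instead of being repeated. Threads help with inference only when the underlying library releases the GIL during heavy computation; otherwise a process pool would be the closer fit.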

Secure File Handling

The Claim Check pattern dramatically improved performance and security:

- Large files uploaded directly to Azure Blob Storage via SAS tokens

- API receives only a blob reference (URI), keeping payloads small

- Tools fetch files from Blob Storage only when needed

- Automatic cleanup of temporary files after a configurable TTL (default 24 hours)

- Virus scanning integration before file processing
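The flow in the list above can be sketched without any cloud dependency. In the example below, `InMemoryBlobStore` is a stand-in for Azure Blob Storage, and the `blob://` URI scheme, the `input_ref` field, and `execute_tool` are hypothetical names chosen for illustration. SAS-token uploads and virus scanning are left out.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InMemoryBlobStore:
    """Stand-in for Azure Blob Storage: maps a reference to (bytes, expiry time)."""
    ttl_seconds: float = 24 * 60 * 60  # mirrors the 24-hour default TTL
    _blobs: dict = field(default_factory=dict)

    def put(self, data: bytes, now: Optional[float] = None) -> str:
        now = time.time() if now is None else now
        ref = f"blob://tool-inputs/{uuid.uuid4()}"  # hypothetical URI scheme
        self._blobs[ref] = (data, now + self.ttl_seconds)
        return ref

    def get(self, ref: str) -> bytes:
        data, _expiry = self._blobs[ref]
        return data

    def cleanup(self, now: Optional[float] = None) -> int:
        """Delete blobs whose TTL has elapsed and return how many were removed."""
        now = time.time() if now is None else now
        expired = [ref for ref, (_, expiry) in self._blobs.items() if expiry <= now]
        for ref in expired:
            del self._blobs[ref]
        return len(expired)


def execute_tool(store: InMemoryBlobStore, request: dict) -> dict:
    """The request carries only a reference; the bytes are fetched when the tool runs."""
    payload = store.get(request["input_ref"])
    return {"tool": request["tool"], "bytes_processed": len(payload)}
```

The request dictionary stays a few hundred bytes regardless of file size, which is the point of the pattern: the API process never buffers the file itself.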

Kubernetes Deployment

Deployed to Azure Kubernetes Service (AKS) with production-grade configuration:

- Horizontal Pod Autoscaler (HPA) based on CPU and memory metrics

- Health check endpoints (liveness and readiness probes)

- Secrets injected via Azure Key Vault CSI driver

- Logging to Azure Monitor with structured JSON output

- Blue-green deployments for zero-downtime updates
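For the structured JSON output mentioned in the list above, one minimal approach uses only the standard `logging` module. This is a sketch under assumptions: the field names and the `context` attribute are a hypothetical convention, and shipping the lines to Azure Monitor is left to whatever collects the container's output.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "timestamp": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Hypothetical convention: extra fields travel in a "context" attribute.
        context = getattr(record, "context", None)
        if context:
            payload["context"] = context
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload, default=str)


def configure_logging(level: int = logging.INFO) -> None:
    """Replace the root logger's handlers with a single JSON stream handler."""
    handler = logging.StreamHandler()  # writes to stderr by default
    handler.setFormatter(JsonFormatter())
    logging.basicConfig(level=level, handlers=[handler], force=True)
```

After `configure_logging()`, a call such as `logging.getLogger("mcp.tools").info("tool finished", extra={"context": {"tool": "text_summarizer"}})` emits a single JSON line that a log pipeline can index by field.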

Exposed AI Tools

The platform currently exposes 9 AI tools across different categories:

Vision Tools

- Image classification (CLIP-based)

- Object detection (YOLO)

- OCR and document extraction

NLP Tools

- Text summarization

- Sentiment analysis

- Named entity recognition

Utility Tools

- Document conversion (PDF to text)

- Language translation

- Data validation and cleaning

Technical Stack

Performance & Scale

- Handles 500+ concurrent tool executions per pod

- Average API response time under 200ms for tool discovery

- Successfully processed files up to 500 MB using the claim check pattern

- Achieved 99.9% uptime across a 3-month production period

- Scales from 2 to 20 pods based on load (HPA configured)

- Zero-downtime deployments with rolling updates
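To make the 2-to-20 pod range concrete, the sketch below applies the replica formula from the Kubernetes HPA documentation, `ceil(currentReplicas * currentMetric / targetMetric)`, and clamps the result to the configured bounds. The function name is hypothetical and the platform's actual CPU and memory targets are not stated here, so the ratios in the usage note are made up for illustration. The real controller also applies stabilization windows and readiness handling that this ignores.

```python
import math


def desired_replicas(current_replicas, metric_ratios, min_replicas=2,
                     max_replicas=20, tolerance=0.1):
    """Approximate the HPA decision for one evaluation cycle.

    Each entry in metric_ratios is current value / target value for one
    metric (for example CPU and memory). The largest proposal wins.
    """
    proposals = []
    for ratio in metric_ratios:
        if abs(ratio - 1.0) <= tolerance:
            proposals.append(current_replicas)  # close enough to target: no change
        else:
            proposals.append(math.ceil(current_replicas * ratio))
    return max(min_replicas, min(max_replicas, max(proposals)))
```

With 4 pods, CPU at 1.5 times its target and memory at 0.8 times its target, the CPU proposal of 6 pods wins. A ratio of 3.0 at 10 pods would ask for 30 and is capped at 20.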

Key Learnings

- Dynamic Configuration is Powerful: The CSV-driven registry allowed non-developers to add tools, significantly reducing deployment cycles.

- Claim Check Pattern for Files: Moving large files out of the API request path made responses 10x faster and eliminated memory issues.

- Observability from Day One: Structured logging and metrics collection made debugging production issues much easier than anticipated.

- Kubernetes Complexity: While powerful, K8s required significant operational knowledge. Proper health checks and resource limits were critical.

Want to Learn More?

I'm happy to dive deeper into the technical architecture or discuss how similar patterns could be applied to your infrastructure.